Better QR Codes

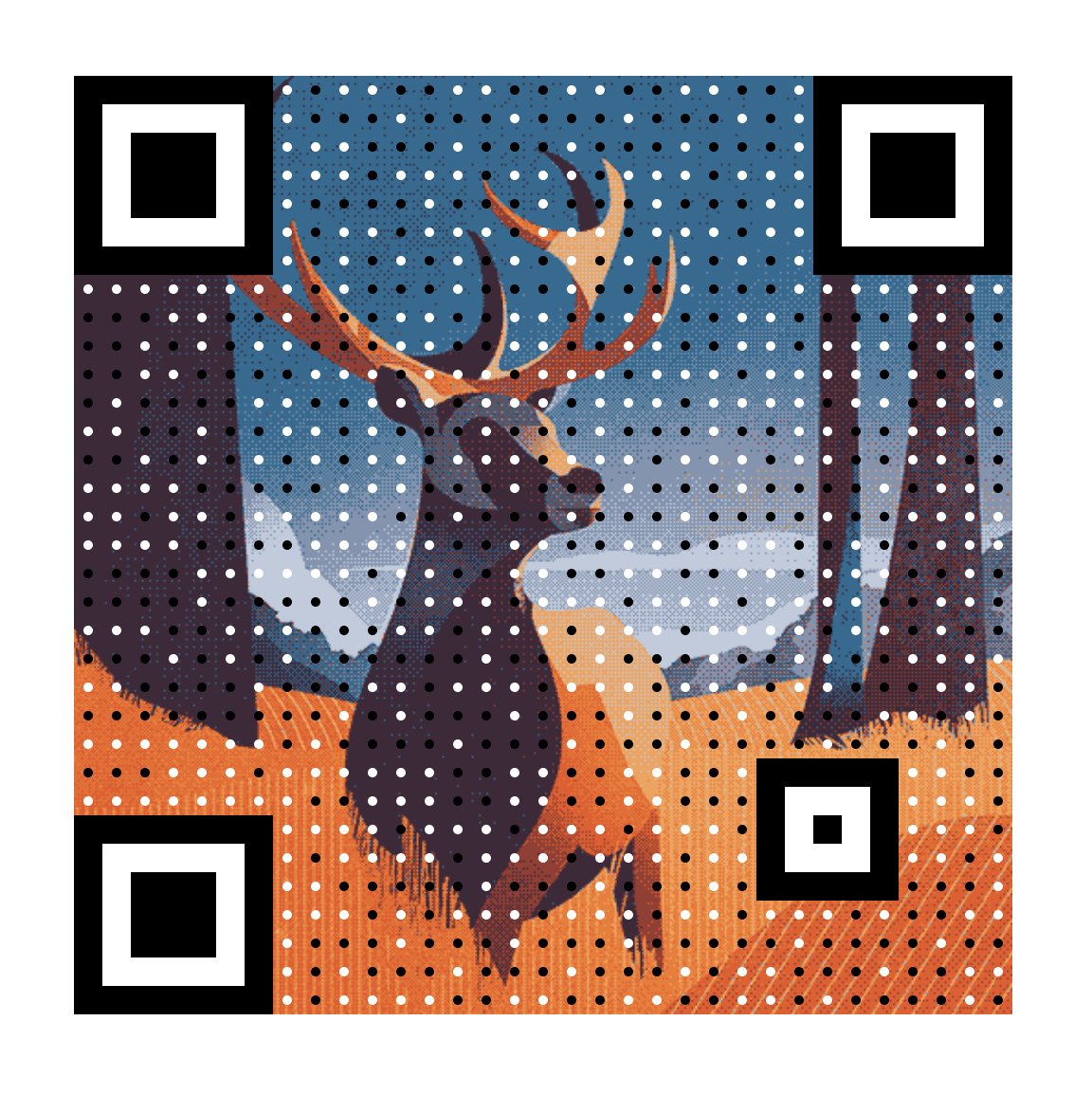

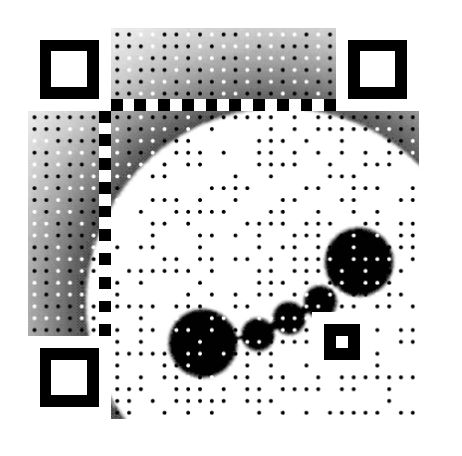

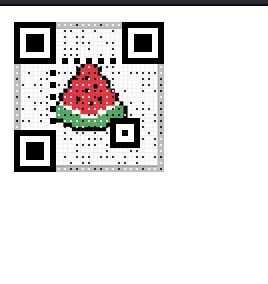

A custom Python pipeline that embeds full-color images into QR codes while keeping them scannable with standard phone cameras. The technique works by breaking a generated QR code down into its individual cells. It preserves the critical position markers that scanners use to locate the code, and reconstructs the data-carrying cells as an image. It then overlays this image with a pattern of fine dots, calibrated to shift the average luminance within each cell. When a scanner samples a cell, it therefore still reads it as light or dark, so the original data is preserved while the embedded image stays visible to the human eye.
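The luminance calibration can be illustrated with a minimal sketch. It is not the pipeline's actual code: the function names, the `dark_max` and `light_min` thresholds, and the assumption that the preserved markers are the three 7x7 corner finder patterns are all illustrative choices. For each cell it computes what fraction of the area black or white dots would need to cover so that the cell's mean luminance lands on the correct side of a scanner's threshold.

```python
def in_finder_pattern(row, col, size):
    """True if (row, col) lies in one of the three 7x7 corner finder patterns.

    Assumption: these are the markers the pipeline leaves untouched.
    """
    top, bottom = row < 7, row >= size - 7
    left, right = col < 7, col >= size - 7
    return (top and left) or (top and right) or (bottom and left)


def dot_coverage(cell_lum, is_dark, dark_max=90.0, light_min=165.0):
    """Return (dot_lum, fraction) for one cell.

    cell_lum  mean luminance (0-255) of the image inside the cell
    is_dark   True if the QR data requires this cell to read as dark
    dark_max / light_min are hypothetical safety margins around the
    scanner's light/dark threshold, not values from the original project.
    """
    if is_dark and cell_lum > dark_max:
        # Black dots (luminance 0): solve (1 - f) * cell_lum = dark_max for f.
        return 0.0, 1.0 - dark_max / cell_lum
    if not is_dark and cell_lum < light_min:
        # White dots (luminance 255): solve (1 - f) * cell_lum + 255 f = light_min.
        return 255.0, (light_min - cell_lum) / (255.0 - cell_lum)
    # The image is already on the correct side of the threshold: no dots.
    return 0.0, 0.0


def blended_luminance(cell_lum, dot_lum, fraction):
    """Mean luminance of a cell after dots cover `fraction` of its area."""
    return (1.0 - fraction) * cell_lum + fraction * dot_lum
```

For example, a bright image region (luminance 200) that must read as dark would need black dots over 55% of the cell under these assumed thresholds, while a region that already reads correctly gets no dots at all and shows the image unaltered.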

Related Projects

360 Visualizer

A web tool for creating immersive 360-degree panoramas with text and recorded sound for exhibitions. It works in all major browsers and uses the device compass/gyro (or click and drag) to rotate the panorama intuitively. I developed this for the Cyprus Institute. A demo can be found here.

Unité d'Habitation Wikisurvey

A wiki survey tool—a survey format where participants both vote on and submit new options, so the survey evolves as people interact with it. This implementation draws on two prior systems (All Our Ideas and POLIS) and adds AI-assisted seed generation and automated qualitative coding. I developed this web application as part of the MetaFraming research.

CryoLumens

An AR artwork representing the strength and location of Earth's magnetic fields using NASA's real-time sensor network, overlaying data-driven particle systems on an original painting using image tracking. When viewed through a phone, the painting comes alive with particles that shift and flow based on live magnetic field data. I developed the coding and visuals for Eli Joteva. Technical: Live sensor intensities are baked into packed textures so particles animate by interpolating a texture index on the GPU, keeping the visualization real-time without CPU overhead.
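The texture-index interpolation can be sketched on the CPU to show the idea. This is an illustration in Python, not the artwork's shader code. It assumes the packed texture stores one row per time step and one column per sensor, and `sample_packed` is a hypothetical name.

```python
import math


def sample_packed(frames, t):
    """Linearly interpolate per-sensor intensities at fractional row index t.

    frames  list of rows, one row per time step, one value per sensor
            (a stand-in for the packed texture; the layout is an assumption)
    t       fractional row index, clamped to the valid range
    """
    t = max(0.0, min(float(t), len(frames) - 1))
    lo = int(math.floor(t))
    hi = min(lo + 1, len(frames) - 1)
    w = t - lo  # blend weight between the two neighbouring rows
    return [(1.0 - w) * a + w * b for a, b in zip(frames[lo], frames[hi])]
```

On a GPU the same blend is typically obtained from the texture sampler's linear filtering, so advancing `t` each frame is the only per-frame work left.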

Other Matter

An AR exhibition with Valerie Messini and her students at the University of Applied Arts in Vienna. To support the students' creation of interactive and reactive sculptures, I extended the Wikar platform with several new features. This included more robust QR code scanning (improving reliability for inverted codes), expanded UI customization options, and a set of "interaction primitives" that students could use for proximity-based events or custom user controls. A video of the exhibition can be seen here.
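One common way to make scanning tolerate inverted codes (light modules on a dark background) is to retry the decode on a luminance-inverted copy of the frame. The sketch below shows that pattern only. It is not a description of how Wikar implements it, and `decode` is a hypothetical callable standing in for whatever QR decoder is in use.

```python
def invert(gray):
    """Return a luminance-inverted copy of a grayscale image (rows of 0-255 ints)."""
    return [[255 - px for px in row] for row in gray]


def decode_with_inversion(gray, decode):
    """Try the frame as captured, then inverted.

    decode  hypothetical callable: takes a grayscale image and returns the
            decoded string, or None when no code is found
    """
    result = decode(gray)
    if result is not None:
        return result
    # Second attempt on the inverted frame catches light-on-dark codes.
    return decode(invert(gray))
```

The second pass only runs when the first one fails, so normally printed codes pay no extra cost.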